Organizations spend real money on penetration testing and, too often, walk away with the same vulnerabilities they started with. The test happened. The report landed. The checkbox got checked. Nothing significant changed.

Chris Blow, director, cyber intelligence and adversarial operations at a Fortune 100 international insurance company, has seen this pattern enough times to finish the sentence before the story starts. Too many organizations approach pen testing with the attitude that they want their compliance problem solved. "I want my report so I can get my checkbox," he says, describing that mindset. Then they see the pen tester again the next year. The compliance motivation isn't inherently wrong, but when compliance is the only driver, the work is performative by design.

When organizations do act on remediation, they often take the report cataloging the findings and assign someone to fix the flagged issue.

"The problem is they're going to go and fix the one thing, that one vulnerability in one place, but that vulnerability exists in 10,000 other places within their environment," he says. "Great, you put a finger in the dike."

The finger-in-the-dike framing is apt. As we covered in a story last month, Cobalt's 2026 State of Pentesting Report, which draws on five years of real-world pentest data, puts the cumulative resolution rate at just 52%, even as the typical organization resolves 86% of its most recent high-risk findings.

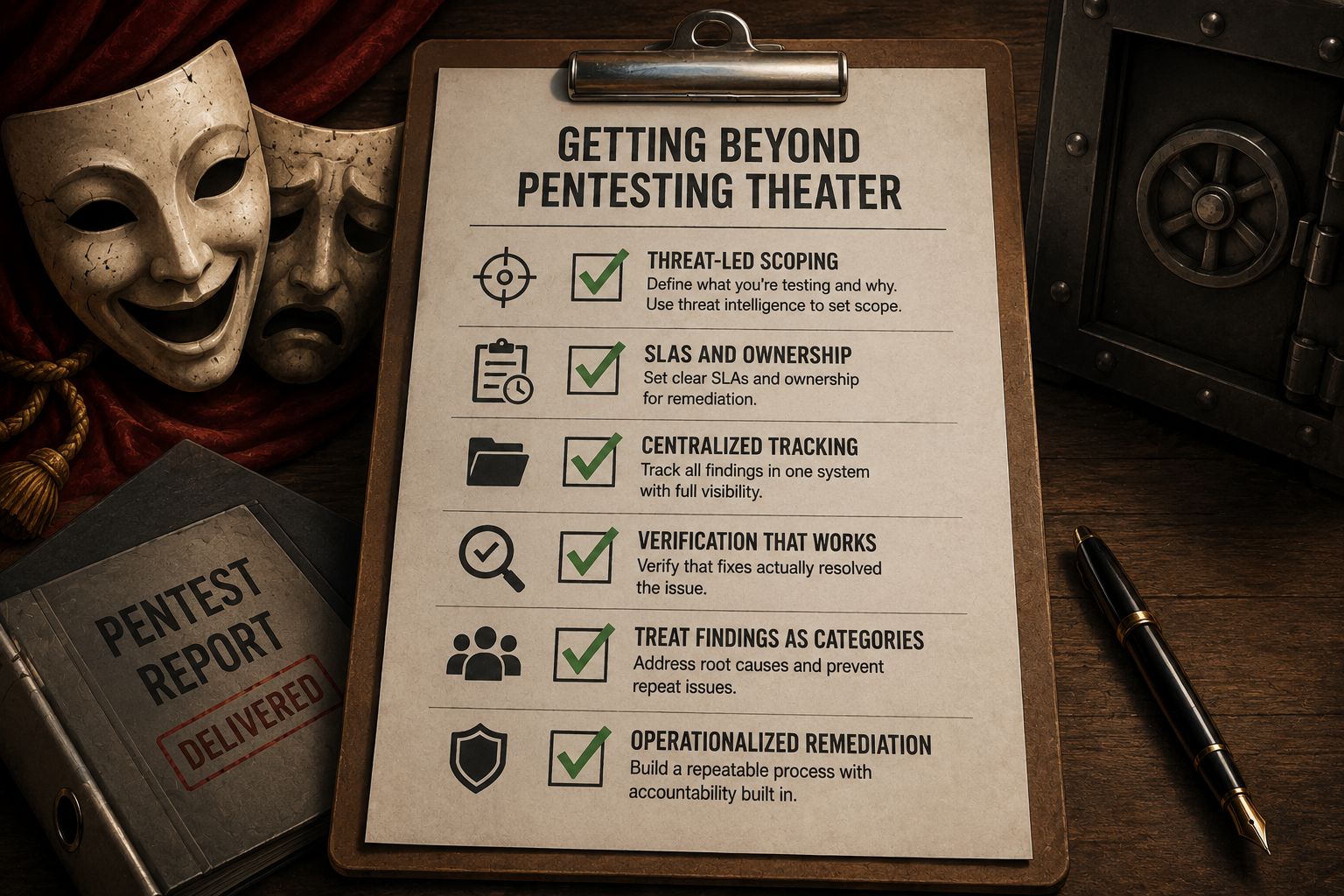

Getting beyond pentesting theater

What organizations need to succeed and get beyond "pentesting theater" isn't complicated: following a pentest, they need an operationalized vulnerability remediation effort with accountability built in. That means a program with SLAs, centralized tracking, verification that fixes actually closed the issue, and, critically, the organizational reflex to treat a finding as a category, not a one-off. "If you don't have that foundation," Blow says, "bringing in a pen testing team does you zero good."

Poorly defined scope compounds the challenge. Organizations that can't answer "what are we testing and why" before the engagement starts are almost certainly wasting resources. The question isn't whether you have crown jewels worth protecting; it's whether you've thought through everything adjacent to them that an attacker would happily pilfer while you're protecting the vault. That can mean credentials sitting in SharePoint documents, or sales reps without password managers stuffing credentials into OneNote.

Regulatory frameworks like DORA at least attempt to force the scoping conversation. DORA's requirement for threat-led pen testing, with threat intelligence work conducted before the engagement begins, imposes a structure that random annual assessments don't. The difference shows up in how organizations respond to active threat intelligence. For instance, when Scattered Spider was aggressively targeting Okta integrations, mature programs were already mapping which web apps in their environment relied on Okta for authentication and scoping tests accordingly. Organizations without that threat context were testing whatever they had tested previously.

The AI dimension adds urgency to the foundation question. Cobalt's recent survey data shows that the resolution rate for AI-found vulnerabilities is only 38%. That gap between discovery and remediation is exactly the problem Blow describes: a pen test finding that reveals a category of AI security risk means nothing if the organization lacks the process to operationalize the response.

The answer isn't another pen test. It's building the remediation infrastructure that makes a pen test worth commissioning in the first place: defined SLAs, vulnerabilities treated as class problems, verified fixes, and scope determined by threat intelligence rather than last year's audit calendar. Without that foundation, the test is a ceremony. The adversary doesn't care about the report.