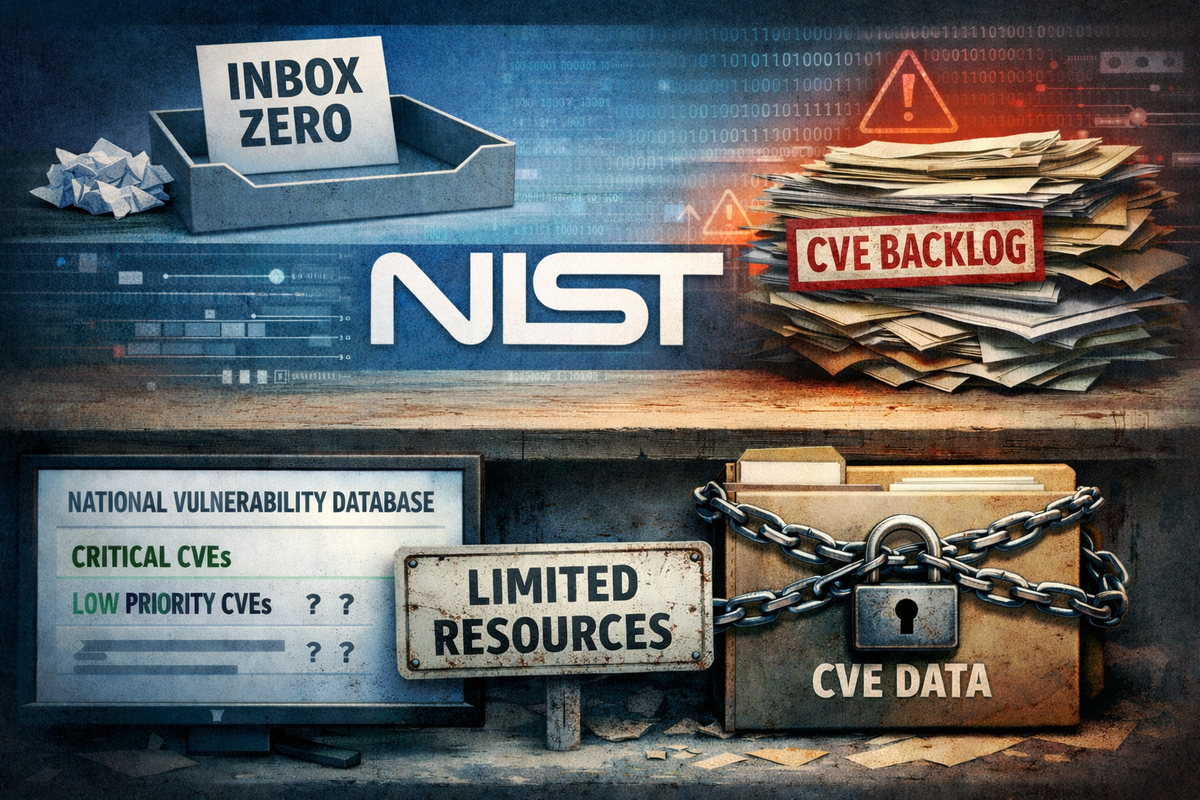

"The Vulnpocalypse" is a catchy name, if a bit hyperbolic, and it follows the celebrity-naming model of vulnerabilities: Heartbleed, Shellshock, POODLE (man, 2014 was a doozy). Soon, we will be overwhelmed by a deluge of vulnerabilities as AI discovers and discloses them across the board; adversaries will build exploits, using AI, even before the vulnerabilities can be assigned a CVE. If the CVE system even survives.

Perhaps that will usher in an era of secure-by-AI code, where software never again has vulnerabilities. If you believe that will happen any time soon, I have a bridge in Arizona to sell you. But that is neither here nor there.

Here is the reality for CISOs: the Vulnpocalypse is likely real, even if it ends up being a slow-motion catastrophe. But it is not your problem. You can be excused for thinking otherwise: 16% of exhibitors at RSAC 2026 certainly think it is, as did 29% of Black Hat 2025 exhibitors. Your peers turn to you to prioritize the software defects they aren't racing to fix. The CEO and the Board both expect you to report on vulnerability management. The entire ecosystem behaves as if it is your problem.

But it’s not.

Imagine, for a moment, an F-16 pilot. They fly one of the world’s most advanced aircraft. They have a crew chief responsible for the care and maintenance of that airplane; nothing touches it except under the chief's supervision. The crew chief follows reams of technical manuals published by the US Air Force—notably the F-16 System Program Office and the Ogden Air Logistics Complex—using content provided by the manufacturer, Lockheed Martin.

That is it. If you want to change, improve, or fix an F-16, the directive comes from the people who build F-16s, the people who buy F-16s, and the people who maintain F-16s. If there is a flaw in an F-16, regardless of who identifies it, the fix is always the responsibility of the development and procurement supply chain.

But that isn’t the case for software. Developers write software—sometimes manually, sometimes by incorporating dependencies, and increasingly by using AI—and then deploy it. As long as it functions, they often ignore anything that isn't a feature request. Patch management, upgrading dependencies, and general software maintenance are sidelined in the race to deploy more features. Vulnerabilities accumulate. Left alone, this gap would have reached a breaking point long ago.

However, somewhere along the way, security teams stepped into the breach and took accountability for vulnerability management. Software teams were happy to let them. Uncomfortable conversations moved to rooms that didn't include engineers, and security teams became easy to ignore—or worse, to "nerdsnipe" with questions like, "What is the exact prioritization order for these 197 vulnerabilities?" Decades have passed while security energy has been consumed by finding, explaining, and cataloging an increasingly large number of software defects. But the energy required to fix them is missing—because security teams do not fix software bugs.

The Vulnpocalypse is a problem. But it’s the problem of the CIO, the CTO, the head of Engineering. As long as the CISO stands in front of the runaway train, though, no one is going to stop it.