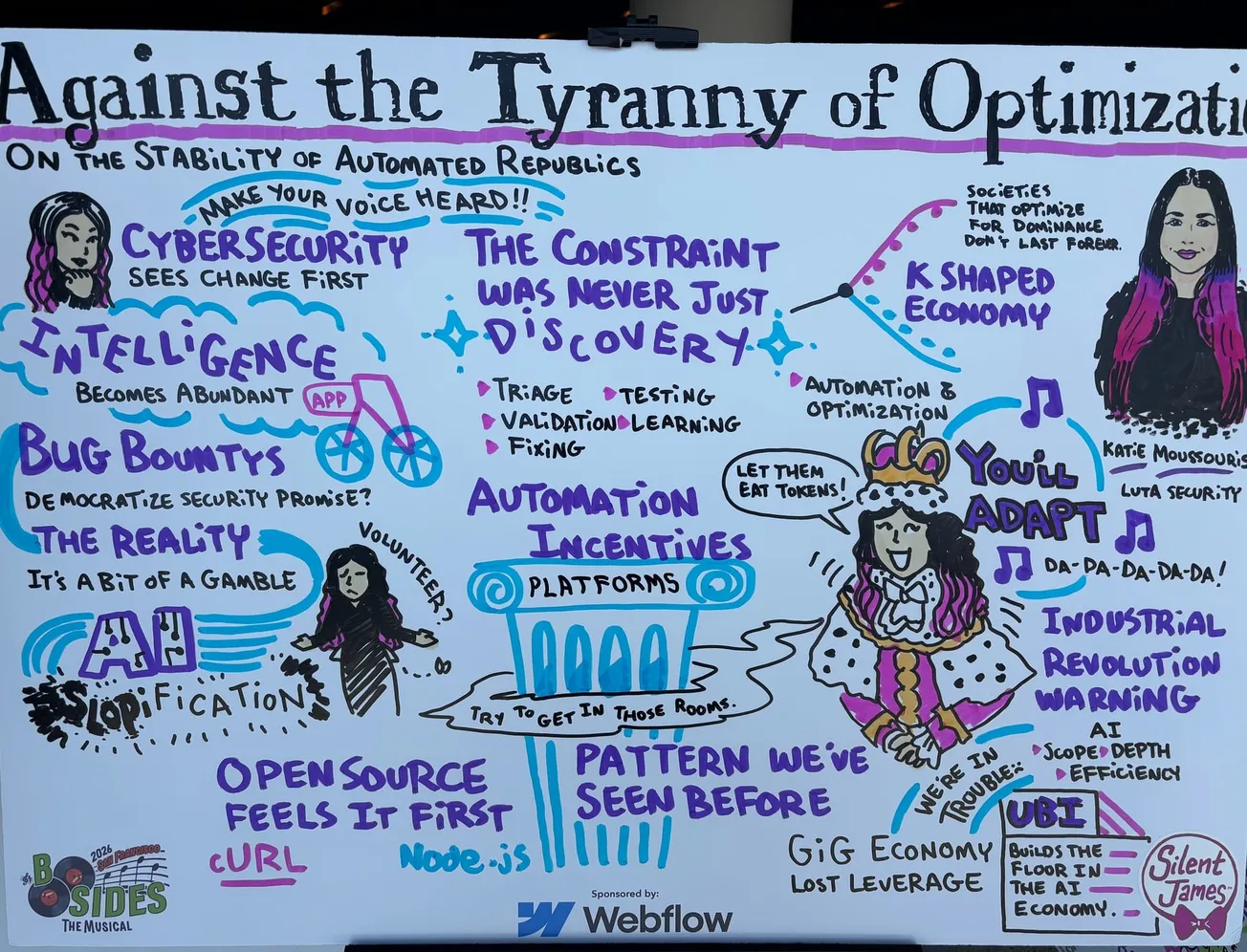

At BSides San Francisco 2026, my friend Katie Moussouris delivered a warning: Optimization itself is becoming a form of tyranny that threatens to upend and destroy livelihoods.

Up to this point, automation has been framed as augmentation: tools to help researchers move faster, triage more findings and scale programs quickly. But Moussouris described something different: automation entering the same arena as humans and winning.

For example: Bug bounty programs, once a proving ground for human ingenuity, are becoming testbeds for machine-driven discovery at scale. For the first time, we're seeing automation directly compete with human labor in bug bounty markets.

Cybersecurity Is The Canary In The Coal Mine

Moussouris noted that cybersecurity is seeing this transformation earlier than most industries.

We’re already seeing it with automated vulnerability discovery pipelines, AI-assisted exploit development, machine-generated findings flooding triage queues, and signal-to-noise problems that are accelerating beyond human capacity.

Security teams are quickly shifting from defending systems to defending their ability to interpret reality.

The Tyranny of Optimization

Moussouris framed this shift as power accumulating wherever optimization wins.

Historically, philosophers imagined tyranny in terms of kings, armies, and governments. They didn't imagine dashboards and systems where decisions are made faster than institutions can respond, driven by efficiency metrics rather than human judgment, and continuously tuned for output, scale, and speed.

This is the “tyranny of optimization.”

When Intelligence Becomes Abundant

In bug bounty ecosystems, abundant intelligence sounds like a good thing. More findings, more coverage and more visibility. But abundance changes value.

When discovery is no longer the constraint, the bottleneck shifts: Human work becomes the constraint, which creates pressure to automate more decisions, reduce human involvement and optimize for throughput over understanding.

The Illusion of Democratization

As Moussouris pointed out, bug bounties were once framed as a democratizing force in security. Anyone, anywhere, could contribute. But as automation scales, she said, the playing field changes.

Those with access to better models, tooling, and infrastructure gain an advantage. Platforms become gatekeepers of incentives. Individual researchers compete not just with each other, but with systems.

Decisions Faster Than Institutions

Decisions are being made faster than institutions can respond. Security teams are already feeling this:

- AI-generated vulnerabilities appear faster than patch cycles

- Exploits evolve faster than detection rules

- Findings accumulate faster than teams can triage

Now zoom out beyond security. What happens when financial systems optimize faster than regulators can react, labor markets shift faster than policy can adapt and critical infrastructure decisions are made by systems no one fully understands?

Cybersecurity is just the preview.

You’ll Adapt, But To What?

Of course, Moussouris said, humans will adapt. We always have. She made the point in song, which I'll share here as soon as it appears on YouTube.

But what are we adapting to? A world where human expertise is sidelined? A system optimized for efficiency at the expense of resilience? An economy where leverage shifts from individuals to platforms and models?

Cybersecurity has always been about anticipating what others miss. Right now, it may be previewing a world where power concentrates around optimization systems and the rest of us are left trying to keep up.

Moussouris believes there's still time for humans to shape the outcome into something better and more democratic. To that end, her advice is simple: Show up.

Show up to state AI hearings. Participate in standards discussions. Engage where AI policy is being shaped in real time.

In other words, be in the room where it happens, as much as possible.